Anthropic's new AI model worries cybersecurity sector

Cybersecurity stocks are under selling pressure, and one name keeps popping up: Anthropic. With its new Claude Mythos model, the AI company has demonstrated how powerful artificial intelligence has become at detecting software vulnerabilities. What was intended as a technological breakthrough for cyber defense has triggered a crisis of confidence in the entire software sector on the markets.

Three shock waves within six weeks

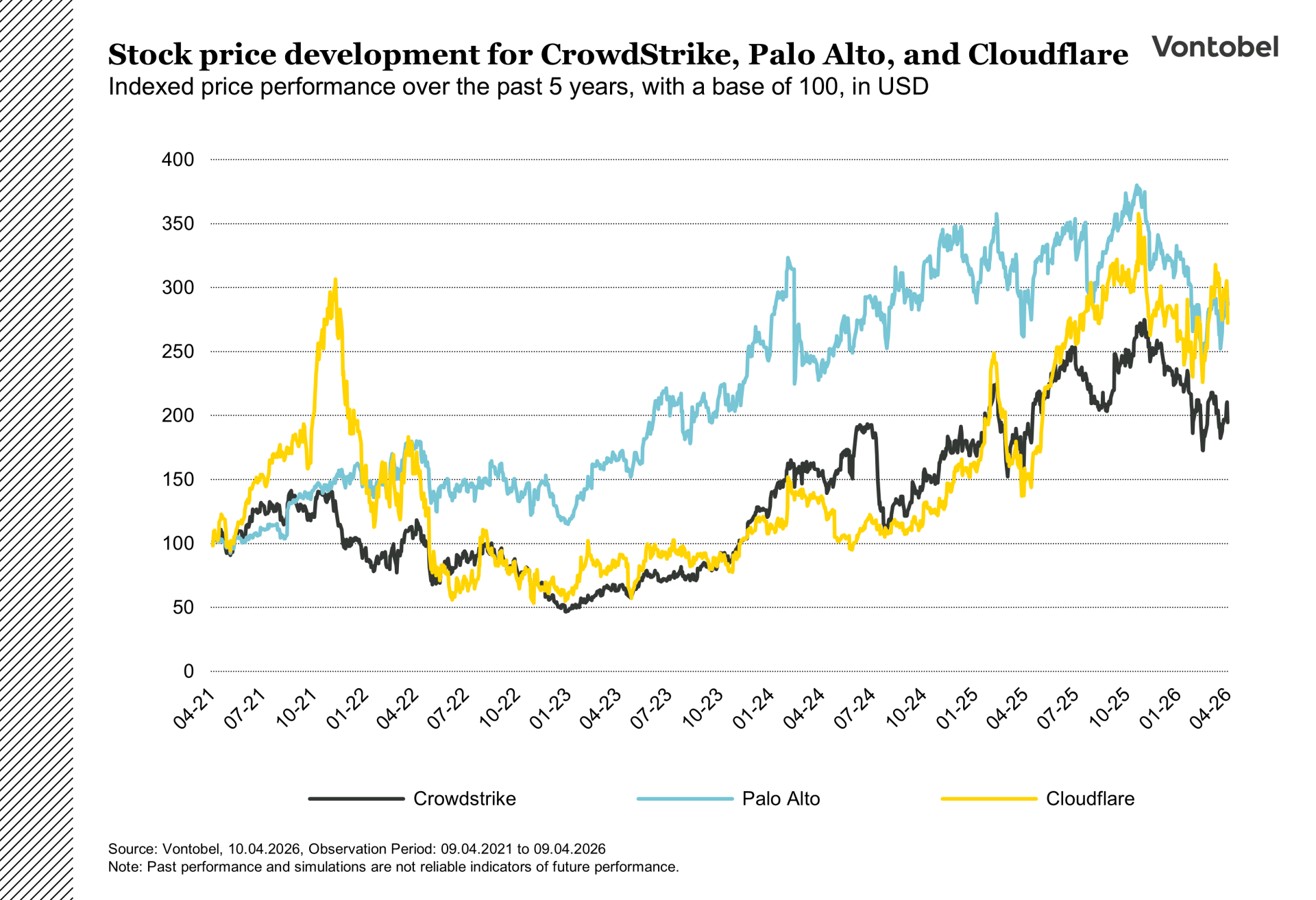

The jitters began in February, when Anthropic unveiled a code scanning tool called Claude Code Security. Although it is a narrowly focused tool that analyzes source code for vulnerabilities and does not replace endpoint protection or network security, the markets reacted violently. Shares of cybersecurity companies such as CrowdStrike and Cloudflare each lost around 8 percent (CNBC, February 23, 2026). CrowdStrike CEO George Kurtz pushed back on LinkedIn: "A code scanning tool is no substitute for the Falcon platform or a comprehensive security program."

The second shock came a month later. Due to a configuration error at Anthropic, an internal blog post became public that described a new model called Claude Mythos - more powerful than any previous system and, according to the draft, fraught with "unprecedented cybersecurity risks" (Fortune, March 27, 2026). In addition, Axios reported that Anthropic had warned senior government officials behind closed doors that Mythos significantly increased the likelihood of large-scale cyberattacks (Axios, April 7, 2026).

The third wave began in early April, when Anthropic officially unveiled the model under the name Project Glasswing - not to the public, however, but exclusively to around 40 selected organizations, including Microsoft, Amazon, Apple, Google, CrowdStrike and Palo Alto Networks (Anthropic, April 7, 2026). The technical results are remarkable: Mythos Preview identified thousands of previously unknown vulnerabilities in every major operating system and browser, some of them over two decades old. In benchmark tests, in 83.1 percent of cases the model not only identified a vulnerability on the first attempt but also demonstrated how an attacker could exploit it (Axios, April 7, 2026). Cybersecurity shares fell once again.

Upheaval or increase in demand?

The sell-off masks a more nuanced reality. Cybersecurity encompasses dozens of different product categories - from endpoint detection to identity management and DDoS protection. An AI model that finds code vulnerabilities threatens only a subset of this business. At the same time, Glasswing's partner structure makes it clear that Anthropic does not want to replace existing security providers, but rather to integrate them. The fact that CrowdStrike and Palo Alto Networks, of all companies, are included as partners underlines this intention.

The latest business figures of the two largest listed cybersecurity providers paint a different picture from the share price performance: CrowdStrike increased its revenue by 23 percent to USD 1.31 billion in the fourth quarter of the 2026 financial year and exceeded consensus estimates (CrowdStrike, March 3, 2026). Palo Alto Networks reported revenue growth of 15 percent to USD 2.6 billion in the same period, also exceeding expectations (Palo Alto Networks, February 17, 2026). The share price declines reflect less a current weakness in earnings than uncertainty about the long-term sustainability of existing business models in the face of increasingly autonomous AI models. At the same time, if AI enlarges the attack surface, for example through autonomous agents, the need for security solutions may increase.

Political headwinds

What's more, Anthropic itself has been caught in political crossfire. In a much-discussed affair in early March, the US Department of Defense classified the company as a supply chain risk after it refused to grant the department unrestricted access to its AI models - in particular, Anthropic drew red lines on fully autonomous weapons and domestic mass surveillance (CNBC, April 8, 2026). The classification is historically unprecedented for a US company and is currently being litigated in various courts (Reuters, April 8, 2026). This conflict raises the fundamental question of how AI companies will navigate between commercial and ethical requirements in the future.

The latest developments underline the prevailing uncertainty: investors are pricing in a disruptive scenario that is technologically plausible but whose extent remains unclear. The same AI that is threatening existing business models is also creating new attack scenarios and thus new protection requirements. Whether the market is currently assessing this ambivalence appropriately or overreacting will only become clear when the real impact on company budgets and security architectures becomes apparent.